Object Storage on JSC Cloud

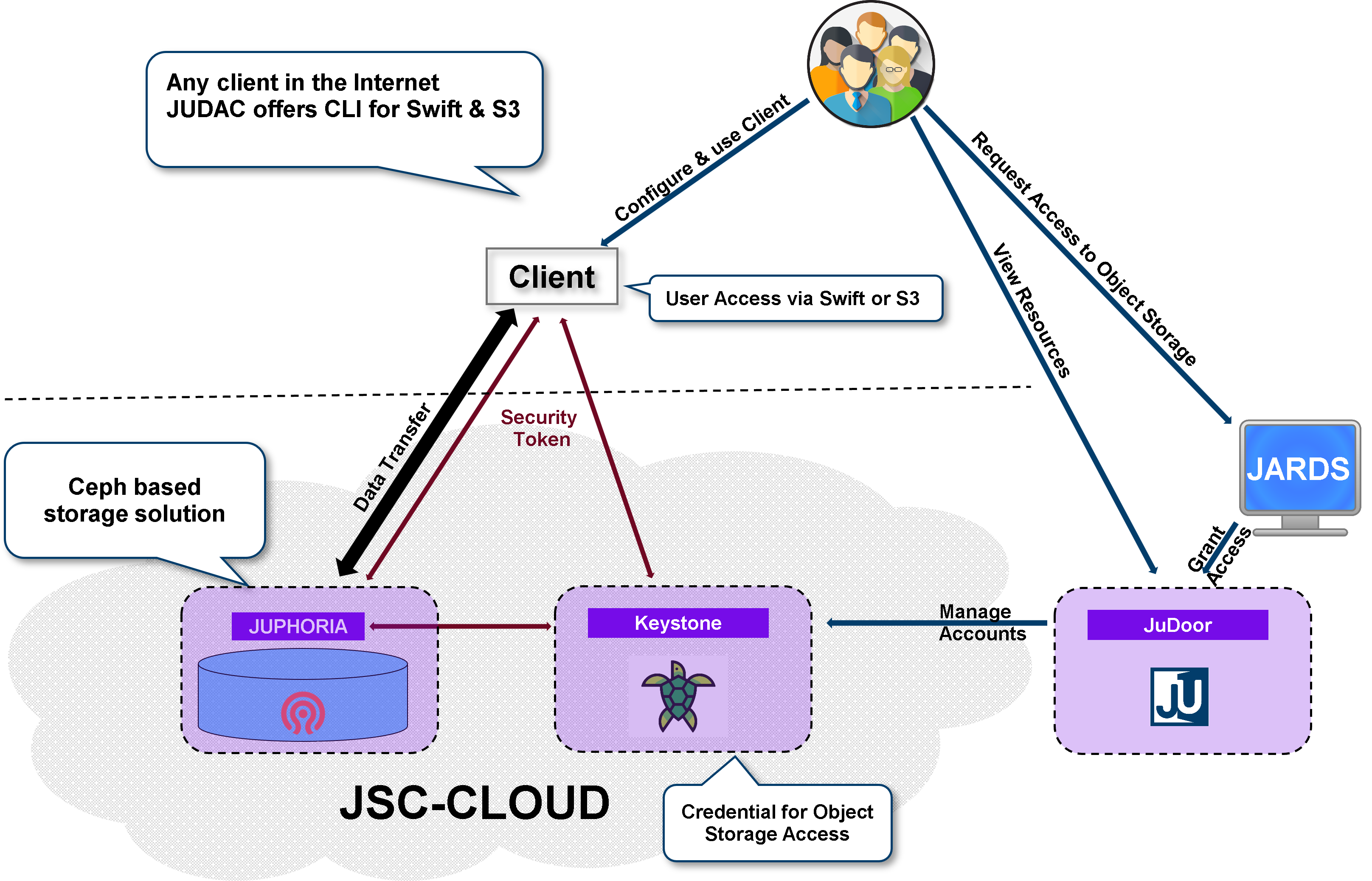

Besides classical block storage, the JSC Cloud offers a Ceph-based object storage interface to store, manage, and access large amounts of data via HTTP. Data projects can request access to this resource via the JARDS portal.

The supported object storage access protocols are OpenStack Swift and S3.

The access endpoint for client authentication is an OpenStack Keystone instance at cloud.jsc.fz-juelich.de, which is connected to the JuDoor user database.
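Clients such as the openstack CLI first obtain a token from this Keystone instance. To illustrate that exchange, here is a minimal sketch of a Keystone v3 token request for an application credential (see OpenStack Credentials Creation below), using only the Python standard library. It assumes that the Keystone v3 API is served directly under the OS_AUTH_URL from the openrc file (https://cloud.jsc.fz-juelich.de:5000); the function names token_request and issue_token are hypothetical.

```python
import json
import urllib.request

# Assumption: OS_AUTH_URL from the openrc file, with the v3 API directly below it
AUTH_URL = "https://cloud.jsc.fz-juelich.de:5000"


def token_request(credential_id: str, secret: str) -> urllib.request.Request:
    """Build (but do not send) a Keystone v3 token request for an application credential."""
    body = {
        "auth": {
            "identity": {
                "methods": ["application_credential"],
                "application_credential": {"id": credential_id, "secret": secret},
            }
        }
    }
    return urllib.request.Request(
        AUTH_URL + "/v3/auth/tokens",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def issue_token(credential_id: str, secret: str) -> str:
    """Send the request; Keystone returns the token in the X-Subject-Token header."""
    with urllib.request.urlopen(token_request(credential_id, secret)) as resp:
        return resp.headers["X-Subject-Token"]
```

Only issue_token() performs a network call. In day-to-day use, sourcing the openrc file and letting the CLI or an SDK handle authentication is simpler.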

OpenStack Credentials Creation

Note

A user must first be granted access to a project's object storage resource.

You first need to create OpenStack credentials to access the object storage resource. You can create these access credentials in our JSC Cloud web frontend, JSC-CLOUD.

Multiple access credentials can be created for different use cases.

Important

The secret is only available at creation time. Store it in a safe place.

Note

Credentials belong to a project. If a user has access to multiple object store projects, separate credentials must be created.

User Environment Preparation

Download the file app-cred-<credential name>-openrc.sh. Its content is similar to:

#!/usr/bin/env bash
export OS_AUTH_TYPE=v3applicationcredential
export OS_AUTH_URL=https://cloud.jsc.fz-juelich.de:5000
export OS_IDENTITY_API_VERSION=3
export OS_REGION_NAME="JSCCloud"
export OS_INTERFACE=public
export OS_APPLICATION_CREDENTIAL_ID=<credential ID>
export OS_APPLICATION_CREDENTIAL_SECRET=<secret>
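Scripts that cannot source a shell file can read the same variables directly. The following is a minimal sketch, assuming the file only contains simple `export NAME=value` lines as shown above (shell expansions are not evaluated); the function name read_openrc is hypothetical.

```python
import re
import shlex

# Matches lines of the form: export NAME=value
_EXPORT_RE = re.compile(r"^\s*export\s+([A-Za-z_][A-Za-z0-9_]*)=(.*)$")


def read_openrc(text: str) -> dict[str, str]:
    """Return the exported variables of an openrc file as a dict.

    Assumes simple `export NAME=value` lines; other lines (e.g. the shebang) are ignored.
    """
    env = {}
    for line in text.splitlines():
        match = _EXPORT_RE.match(line)
        if match:
            name, raw_value = match.groups()
            # shlex removes shell quoting, e.g. "JSCCloud" -> JSCCloud
            parts = shlex.split(raw_value)
            env[name] = parts[0] if parts else ""
    return env
```

For example, read_openrc(open("app-cred-<credential name>-openrc.sh").read())["OS_AUTH_URL"] yields the authentication URL. Avoid printing OS_APPLICATION_CREDENTIAL_SECRET in logs.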

Activate the OpenStack environment variables by sourcing the file in your favorite shell:

$ source app-cred-<credential name>-openrc.sh

OpenStackClient CLI

openstack is a CLI for OpenStack that brings the command set for Compute, Identity, Image, Object Storage and Block Storage APIs together in a single shell with a uniform command structure.

Detailed information about usage of the OpenStack CLI can be found in the official OpenStackClient Documentation.

The CLI is provided by the python-openstackclient package, which also provides good Python bindings for programmatically accessing OpenStack APIs from scripts.
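From Python, the openstacksdk library (installed as a dependency of python-openstackclient) can reuse the sourced environment. A minimal sketch, assuming the openrc file has been sourced: with no arguments, openstack.connect() reads the OS_* environment variables, and conn.object_store.containers() lists the Swift containers of the project. The helper names and the list of required variables are our own choice, not part of any official interface.

```python
import os

# Variables written by app-cred-<credential name>-openrc.sh (our selection)
REQUIRED_VARS = (
    "OS_AUTH_TYPE",
    "OS_AUTH_URL",
    "OS_APPLICATION_CREDENTIAL_ID",
    "OS_APPLICATION_CREDENTIAL_SECRET",
)


def missing_openstack_vars(env=None):
    """Return the names of required OS_* variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


def list_containers():
    """Print the object store containers visible to the application credential."""
    import openstack  # openstacksdk; imported lazily so the check above has no dependency

    missing = missing_openstack_vars()
    if missing:
        raise RuntimeError(f"source the openrc file first; missing: {', '.join(missing)}")
    conn = openstack.connect()  # picks up the OS_* environment variables
    for container in conn.object_store.containers():
        print(container.name)
```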

Useful Examples

List projects:

$ openstack project list
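The same command can be used from scripts through the CLI's machine-readable output format (-f json). A minimal sketch, assuming the JSON keys mirror the table columns "ID" and "Name"; the function names are hypothetical.

```python
import json
import subprocess


def parse_project_list(json_text: str) -> dict[str, str]:
    """Map project names to IDs from `openstack project list -f json` output."""
    return {row["Name"]: row["ID"] for row in json.loads(json_text)}


def list_projects() -> dict[str, str]:
    """Run the CLI (requires a sourced openrc file) and parse its JSON output."""
    result = subprocess.run(
        ["openstack", "project", "list", "-f", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return parse_project_list(result.stdout)
```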

Swift Protocol - Manage objects and containers

Full documentation can be found in the official python-swiftclient docs. Ceph supports a RESTful API that is compatible with the basic data access model of the OpenStack Swift API; see Ceph Swift API.
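Underneath, the Swift API is plain HTTP: a token in the X-Auth-Token header, containers and objects as path segments below the project's storage URL. The sketch below only builds the requests (standard library only). The storage URL and token are placeholders you would obtain yourself, for example from `openstack catalog show object-store` and `openstack token issue`; the function name swift_request and the example URL are hypothetical.

```python
import urllib.parse
import urllib.request


def swift_request(storage_url, token, method, container=None, obj=None, data=None, query=None):
    """Build (but do not send) a Swift API request.

    storage_url: object-store endpoint of the project (placeholder)
    token: Keystone token, sent in the X-Auth-Token header
    """
    url = storage_url.rstrip("/")
    if container is not None:
        url += "/" + urllib.parse.quote(container, safe="")
        if obj is not None:
            # "/" in object names acts as a pseudo-directory separator, so keep it
            url += "/" + urllib.parse.quote(obj, safe="/")
    if query:
        url += "?" + urllib.parse.urlencode(query)
    return urllib.request.Request(url, data=data, method=method, headers={"X-Auth-Token": token})
```

For example, PUT on a container creates it, PUT on an object uploads data, and GET on a container with query={"format": "json"} lists its objects; pass the request to urllib.request.urlopen() to send it.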

S3 Protocol - Manage objects and containers

On the JUDAC login nodes, the following open-source object store client CLIs are available:

s3cmd, a command line tool for Amazon Simple Storage Service (S3)

MinIO client mc, an S3-compatible command line client

S3 Credentials Creation

Use the OpenStack credentials created earlier to create EC2 credentials, which are required for S3 access. First, activate the OpenStack environment variables by sourcing the file in your favorite shell:

$ source app-cred-<credential name>-openrc.sh

Generate an access/secret key pair with the command:

$ openstack ec2 credential create
+------------+----------------------------------------------------------------------------------------+
| Field      | Value                                                                                  |
+------------+----------------------------------------------------------------------------------------+
| access     | <access key>                                                                           |
| links      | {'self': 'https://cloud.jsc.fz-juelich.de:5000/.../<user identifier>/.../<access key>'}|
| project_id | <project identifier>                                                                   |
| secret     | <secret key>                                                                           |
| trust_id   | None                                                                                   |
| user_id    | <user identifier>                                                                      |
+------------+----------------------------------------------------------------------------------------+

List your available credentials:

$ openstack ec2 credential list
+--------------+--------------+----------------------+-------------------+
| Access       | Secret       | Project ID           | User ID           |
+--------------+--------------+----------------------+-------------------+
| <access key> | <secret key> | <project identifier> | <user identifier> |
+--------------+--------------+----------------------+-------------------+
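When a script needs the key pair, it is easier to request JSON output (`openstack ec2 credential list -f json`) than to parse the table. A minimal sketch, assuming the JSON keys match the column headers above ("Access", "Secret", "Project ID"); the function name pick_ec2_credential is hypothetical.

```python
import json


def pick_ec2_credential(json_text: str, project_id: str) -> tuple[str, str]:
    """Return (access, secret) of the first EC2 credential for project_id.

    Expects the output of `openstack ec2 credential list -f json`.
    """
    for row in json.loads(json_text):
        if row["Project ID"] == project_id:
            return row["Access"], row["Secret"]
    raise LookupError(f"no EC2 credential for project {project_id}")
```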

S3 CLI Examples

Prepare the s3cmd command by setting up its configuration file (~/.s3cfg):

[default]
access_key = <access key>
check_ssl_certificate = True
check_ssl_hostname = True
host_base = juphoria-s3.fz-juelich.de:443
host_bucket = juphoria-s3.fz-juelich.de:443
human_readable_sizes = True
secret_key = <secret key>
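The configuration file can also be generated from a script. A minimal sketch using Python's configparser, reproducing exactly the settings listed above; the helper names render_s3cfg and write_s3cfg are hypothetical. Because the file contains the secret key, new files are created readable by the owner only (mode 0600, subject to your umask); an existing file keeps its permissions.

```python
import configparser
import io
import os
from pathlib import Path

S3_HOST = "juphoria-s3.fz-juelich.de:443"


def render_s3cfg(access_key: str, secret_key: str) -> str:
    """Return ~/.s3cfg content with the settings shown above."""
    # interpolation=None so that "%" in a secret is written literally
    config = configparser.ConfigParser(interpolation=None)
    config["default"] = {
        "access_key": access_key,
        "check_ssl_certificate": "True",
        "check_ssl_hostname": "True",
        "host_base": S3_HOST,
        "host_bucket": S3_HOST,
        "human_readable_sizes": "True",
        "secret_key": secret_key,
    }
    buffer = io.StringIO()
    config.write(buffer)
    return buffer.getvalue()


def write_s3cfg(access_key: str, secret_key: str, path=None) -> Path:
    """Write the configuration, by default to ~/.s3cfg."""
    path = Path(path) if path else Path.home() / ".s3cfg"
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as f:
        f.write(render_s3cfg(access_key, secret_key))
    return path
```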

s3cmd usage example:

$ s3cmd mb s3://mycontainer
Bucket 's3://mycontainer/' created
$ s3cmd ls
2026-02-11 14:22 s3://mycontainer
$ s3cmd put example.txt s3://mycontainer
upload: 'example.txt' -> 's3://mycontainer/example.txt' [1 of 1]
 20572 of 20572 100% in 0s 2.13 MB/s done
$ s3cmd ls s3://mycontainer
2026-02-11 14:25 20K s3://mycontainer/example.txt
$ s3cmd get s3://mycontainer/example.txt local_file.txt
download: 's3://mycontainer/example.txt' -> 'local_file.txt' [1 of 1]
 20572 of 20572 100% in 0s 161.74 KB/s done
$ cmp local_file.txt example.txt && echo same file
same file
$ s3cmd rb --recursive s3://mycontainer
WARNING: Bucket is not empty. Removing all the objects from it first. This may take some time...
delete: 's3://mycontainer/example.txt'
Bucket 's3://mycontainer/' removed

S3 API example

Ceph supports a RESTful API that is compatible with the basic data access model of the Amazon S3 API. Available implementations and supported S3 features are listed in the Ceph S3 API documentation.

One available SDK is the Python library boto3, which lets you access your data from Python scripts:

import boto3

# boto3.set_stream_logger(name='botocore')  # uncomment to enable debug tracing

session = boto3.session.Session()
s3_client = session.client(
    service_name='s3',
    aws_access_key_id="<access key>",
    aws_secret_access_key="<secret key>",
    endpoint_url="https://juphoria-s3.fz-juelich.de",
)

buckets = s3_client.list_buckets()
print('Existing buckets:')
for bucket in buckets['Buckets']:
    print(f'Bucket: {bucket["Name"]}')
    # "Contents" is missing for an empty bucket; at most 1000 keys are returned per call
    objects = s3_client.list_objects(Bucket=bucket["Name"])
    for obj in objects.get("Contents", []):
        print(f'Object: {obj["Key"]}')
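A single list call returns at most 1000 keys, so buckets with many objects need pagination. A minimal sketch using boto3's paginator for list_objects_v2, assuming the endpoint supports ListObjectsV2 (Ceph RGW does in current releases); the helper name iter_object_keys is hypothetical, and s3_client is the client created above.

```python
def iter_object_keys(s3_client, bucket: str):
    """Yield every object key in bucket, following pagination."""
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket):
        # pages without objects have no "Contents" entry
        for obj in page.get("Contents", []):
            yield obj["Key"]
```

For example, sum(1 for _ in iter_object_keys(s3_client, "mycontainer")) counts all objects in a bucket.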

Large objects

Pay special attention when uploading objects larger than 234 GiB. By default, uploads are done in chunks of 16 MiB each, with a server-side limit of 15,000 chunks per upload (16 MiB × 15,000 = 240,000 MiB ≈ 234 GiB). You can change the chunk size for an upload, up to a maximum supported chunk size of 5 GiB.

To stay within the limit of 15,000 chunks, the chunk size must be at least the object size divided by 15,000, and it cannot exceed 5 GiB. Together, these limits result in a maximum object size of approximately 73 TiB (15,000 × 5 GiB = 75,000 GiB).

For example, a chunk size of 1 GiB allows uploads of up to 15,000 GiB (about 14.6 TiB):

$ s3cmd put --multipart-chunk-size-mb 1024 huge-file.dat s3://mycontainer/huge-file.dat
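To pick a value for --multipart-chunk-size-mb for a given file, the rule above can be turned into a small calculation. A minimal sketch, assuming the limits stated on this page (16 MiB default, 15,000 chunks, 5 GiB maximum) and that s3cmd interprets the option in units of 1024 × 1024 bytes; the function name min_chunk_size_mib is hypothetical.

```python
MiB = 1024 ** 2
GiB = 1024 ** 3
MAX_PARTS = 15_000        # server-side limit on chunks per upload
MAX_CHUNK = 5 * GiB       # largest supported chunk size
DEFAULT_CHUNK = 16 * MiB  # default chunk size


def min_chunk_size_mib(object_size: int) -> int:
    """Smallest whole-MiB chunk size that keeps object_size within MAX_PARTS chunks."""
    chunk = max(DEFAULT_CHUNK, -(-object_size // MAX_PARTS))  # ceiling division
    chunk_mib = -(-chunk // MiB)                               # round up to whole MiB
    if chunk_mib * MiB > MAX_CHUNK:
        raise ValueError("object too large: more than 15,000 chunks of 5 GiB")
    return chunk_mib
```

For example, min_chunk_size_mib(os.path.getsize("huge-file.dat")) returns 70 for a 1 TiB file, so --multipart-chunk-size-mb would need to be at least 70 in that case.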

Keep in mind that if a transfer is interrupted, entire chunks must be re-transferred. Therefore, choose the chunk size carefully, particularly for uploads over poor network connections.

For more information, see the Boto3 docs.
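When uploading from Python instead of s3cmd, the chunk size can be set through boto3's TransferConfig. The sketch below checks the 15,000-chunk and 5 GiB limits described above before starting a managed upload; the helper names parts_needed and upload_large_file are hypothetical, and the limits are hard-coded from this page. boto3's transfer manager may adjust the chunk size on its own (for example to respect the AWS limit of 10,000 parts), so treat the configured value as a request rather than a guarantee.

```python
import os

GiB = 1024 ** 3
MAX_PARTS = 15_000   # server-side limit on chunks per upload (from this page)
MAX_CHUNK = 5 * GiB  # largest supported chunk size (from this page)


def parts_needed(object_size: int, chunk_size: int) -> int:
    """Number of chunks for an upload; raise ValueError if a limit is exceeded."""
    if not 0 < chunk_size <= MAX_CHUNK:
        raise ValueError("chunk size must be positive and at most 5 GiB")
    parts = max(1, -(-object_size // chunk_size))  # ceiling division
    if parts > MAX_PARTS:
        raise ValueError(f"{parts} chunks exceed the limit of {MAX_PARTS}")
    return parts


def upload_large_file(s3_client, filename: str, bucket: str, key: str, chunk_size: int = GiB):
    """Upload filename with boto3's managed transfer using the given chunk size."""
    from boto3.s3.transfer import TransferConfig  # imported lazily; requires boto3

    parts_needed(os.path.getsize(filename), chunk_size)
    config = TransferConfig(multipart_threshold=chunk_size, multipart_chunksize=chunk_size)
    s3_client.upload_file(filename, bucket, key, Config=config)
```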